Latest Announcements

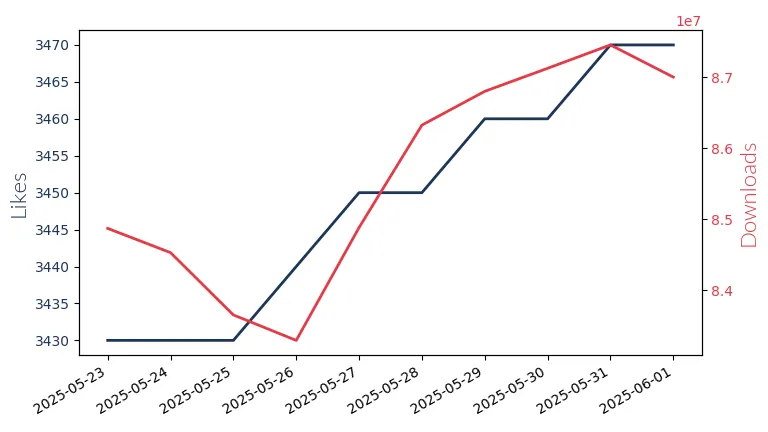

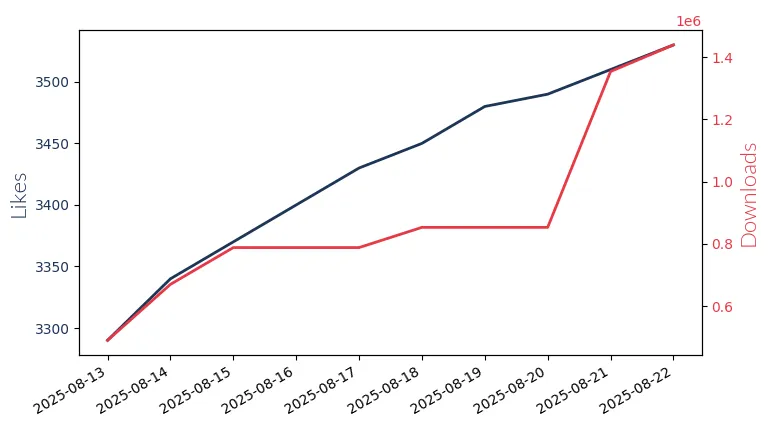

DeepSeek OCR: Vision‑Language Compression Meets Dynamic OCR

Published on 2025-11-19

Trending Models

DeepSeek R1 671B

DeepSeek R1 671B: A bilingual large language model with 671 billion parameters. Supports context lengths up to 128k for advanced code generation and debugging tasks.

GPT-OSS 20B

GPT-OSS 20B: OpenAI's 20-billion-parameter model for low-latency, local applications. Monolingual.

Llama3.2 3B Instruct

Llama3.2 3B Instruct by Meta Llama Enterprise: A multilingual, 3-billion-parameter model with a context length of 8k to 128k, designed for assistant-like chat apps.

Llama3.3 70B Instruct

Llama3.3 70B: Meta's 70-billion-parameter multilingual model for assistant-style chat, with a context window of 128k.

Qwen2.5 7B Instruct

Qwen2.5 7B Instruct: Alibaba's multilingual LLM with 7 billion parameters. Supports context lengths up to 128k for long text generation.

All-MiniLM 22M

All-MiniLM 22M by Sentence Transformers: A compact model for efficient information retrieval.

All-MiniLM 33M

All-MiniLM 33M by Sentence Transformers: A compact, monolingual model for efficient information retrieval.

GPT-OSS 120B

GPT-OSS 120B by OpenAI: A 120-billion-parameter model for complex reasoning tasks. Supports English only.

Gemma3 4B Instruct

Gemma3 4B by Google: 4 billion parameters, 128k/32k context length. Supports multiple languages for creative content generation, chatbots, text summarization, and image data extraction.

Llama3 Gradient 8B Instruct

Llama3 Gradient 8B by Meta Llama: An 8-billion-parameter, English-focused LLM with an 8k context window, suited to commercial and research use.

BGE-M3 567M

BGE-M3 567M by BAAI: A bilingual, 567M-parameter model for efficient information retrieval with an 8k context window.

Gemma3 27B Instruct

Gemma3 27B: Google's 27-billion-parameter LLM for creative content and communications. Supports context up to 128k tokens. Suited to text generation, chatbots, summarization, and image data extraction.